I think one of my best ideas was “vocable synthesis”, which my friend Lucy just reminded me of. I wrote …

Author: Alex McLean

Alpaca Festival recap

Here’s a lovely video by Eva Yap, as a three-minute journey through Alpaca Festival 2025. It was a ride!

When was TidalCycles born?

I didn’t set out to make Tidal; it emerged over an extended period of time, through my creative practice. Still, …

Alpaca Festival and Conference 2025

Alpaca 2025 is a festival and conference exploring Algorithmic Patterns in the Creative Arts. Lucy Cheesman, Ray Morrison, Hazel Ryan …

Pattern Thinking Across Domains

Justin Pickard interviewed me (Alex McLean) for the FoAM Anarchive: Justin brought out some nice themes from our chats, love …

Konnakol, drum kit and code

This is a big one for me – an upcoming collaboration with B C Manjunath and Matt Davies, exploring Carnatic …

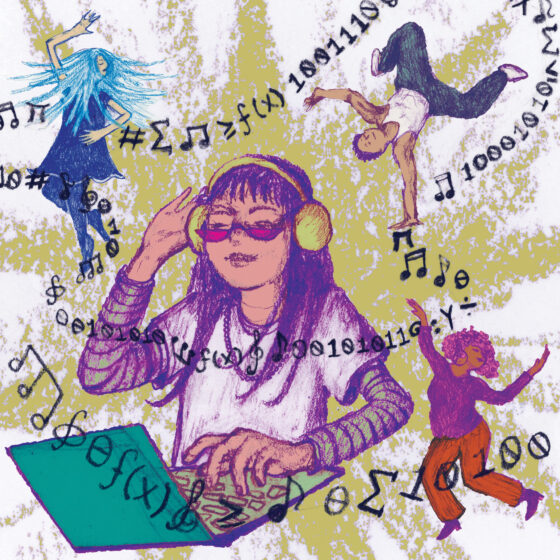

Algoraves are here right now

I just happened on a pandemic-era article about algorave from 2022. It’s really nicely written and researched, and I especially …

Following instructions

I once went to a workshop run by the poet John Hegley; he got us making poetry booklets out of …

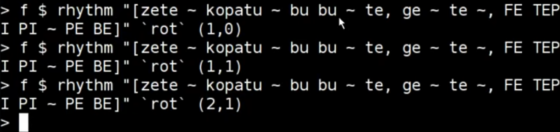

Tidal – a history in types

Some notes from the embedded conference “working out situated universality” hosted by Julian Rohrhuber at IMM Duesseldorf. If you’re reading …

Modulating Time

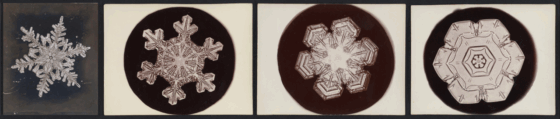

I’m just back from a productive week working with Mika Satomi and Lizzie Wilson on a mini project ‘Modulating Time’ …