I’m off to the RMA study day on “Embodied Research Methods in Music & Sound” in Liverpool on Tues 18th May. …

Author: Alex McLean

Good old internet

I stopped using ‘social media’ a while ago, for the usual reasons: it’s an inhumane way of interacting …

Textile dimensions

Lately I’ve been exploring 8-shaft weaving, trying some things inspired by Laura Devendorf and focussing on ‘crackle weave’ using the AdaCAD …

Order and tension

After my previous warping catastrophe, I started again with some fresh yarn (I’m not sure if I’ll be able to …

Warping catastrophe

I’ve made the below crackle weave design in AdaCAD, but have run out of ‘warp’ threads on my loom, and …

Ecclesall Road Co-op freezers

A story about how some nice sounding freezers in Sheffield hit international news.

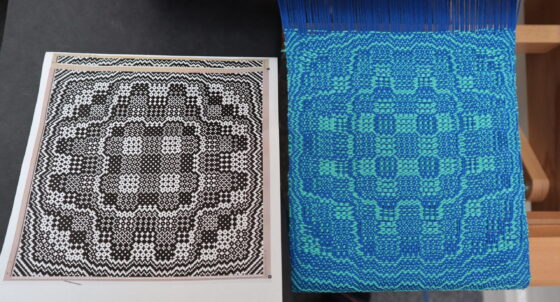

More crackle weave

I’ve been having fun trying to understand crackle weave more, after weaving one of Ralph Griswold’s algorithmically generated patterns. It’s …

We love repetition

I’ve used some samples of different speech synth voices saying “algorave generation, we love repetition” for a while. It’s a …

Bitfield weaving

Continuing the weaving theme, I was happy to see that Laura Devendorf has not only included the ‘bitfield’ feature I …

Exploring 8 shaft weaving

After a bit of time off from weaving after the PENELOPE project, it was great to visit Kristina Andersen, Pei-Ying …